Softmax Classification¶

Note

Concepts: classification, likelihood, softmax, one-hot vectors, zero-one loss, conditional likelihood, MLE, NLL, cross-entropy loss

We will introduce classification problems and some simple models for classification.

Classification¶

Classification problems are supervised machine learning problems where the task is to predict a discrete class for a given input (unlike regression where the output was numeric). A typical example is handwritten digit recognition where the input is an image of a handwritten digit, and the output is one of the discrete categories \(\{0, \ldots, 9\}\). As in all supervised learning problems the training data consists of a set of example input-output pairs.

Likelihood¶

A natural objective in classification could be to minimize the number of misclassified examples in the training data. This number is known as the zero-one loss. However the zero-one loss has some undesirable properties for training: in particular it is discontinuous. A small change in one of the parameters either has no effect on the loss, or can turn one or more of the predictions from false to true or true to false, causing a discontinuous jump in the objective. This means the gradient of the zero-one loss with respect to the parameters is either undefined or not helpful.

A more commonly used objective for classification is conditional likelihood: the probability of the observed data given our model and the inputs. Instead of predicting a single class for each instance, we let our model predict a probability distribution over all classes. Then we adjust the weights of the model to increase the probabilities for the correct classes and decrease it for others. This is also known as the maximum likelihood estimation (MLE).

Let \(\mathcal{X}=\{x_1,\ldots,x_N\}\) be the inputs in the training data, \(\mathcal{Y}=\{y_1,\ldots,y_N\}\) be the correct classes and \(\theta\) be the parameters of our model. Conditional likelihood is:

The second equation assumes that the data instances were generated independently. We usually work with log likelihood for mathematical convenience: log is a monotonically increasing function, so maximizing likelihood is the same as maximizing log likelihood:

We will typically use the negative of \(\ell\) (machine learning people like to minimize), which is known as negative log likelihood (NLL), or cross-entropy loss.

Softmax¶

The linear regression model we have seen earlier produces unbounded \(y\) values. To go from arbitrary values \(y\in\mathbb{R}^C\) to normalized probability estimates \(p\in\mathbb{R}^C\) for a single instance, we use exponentiation and normalization:

where \(i,c\in\{1,\ldots,C\}\) range over classes, and \(p_i, y_i, y_c\) refer to class probabilities and values for a single instance. This is called the softmax function. A model that converts the unnormalized values at the end of a linear regression to normalized probabilities for classification is called the softmax classifier.

We need to figure out the backward pass for the softmax function. In other words if someone gives us the gradient of some objective \(J\) with respect to the class probabilities \(p\) for a single training instance, what is the gradient with respect to the input of the softmax \(y\)? First we’ll find the partial derivative of one component of \(p\) with respect to one component of \(y\):

The square brackets are the Iverson bracket notation, i.e. \([A]\) is 1 if \(A\) is true, and 0 if \(A\) is false.

Note that a single entry in \(y\) effects \(J\) through multiple paths (\(y_j\) contributes to the denominator of every \(p_i\)), and these effects need to be added for \(\partial J/\partial y_j\):

One-hot vectors¶

When using a probabilistic classifier, it is convenient to represent the desired output as a one-hot vector, i.e. a vector in which all entries are ‘0’ except a single ‘1’. If the correct class is \(c\in\{1,\ldots,C\}\), we represent this with a one-hot vector \(p\in\mathbb{R}^C\) where \(p_c = 1\) and \(p_{i\neq c} = 0\). Note that \(p\) can be viewed as a probability vector where all the probability mass is concentrated at c. This representation also allows us to have probabilistic targets where there is not a single answer but target probabilities associated with each answer. Given a one-hot (or probabilistic) \(p\), and the model prediction \(\hat{p}\), we can write the log-likelihood for a single instance as:

Gradient of log likelihood¶

To compute the gradient for log likelihood, we need to make the normalization of \(\hat{p}\) explicit:

The gradient with respect to unnormalized y takes a particularly simple form:

The gradient with respect to \(\hat{p}\) causes numerical overflow when some components of \(\hat{p}\) get very small. In practice we usually skip that and directly compute the gradient with respect to \(y\) which is numerically stable.

MNIST example¶

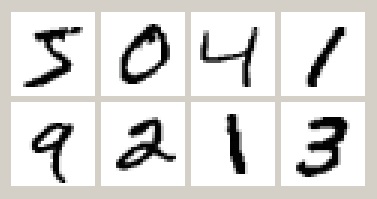

Let’s try our softmax classifier on the MNIST handwritten digit classification dataset. Here are the first 8 images from MNIST, the goal is to look at the pixels and classify each image as one of the digits 0-9:

See training-with-minibatches for more information about the MNIST task, loading and minibatching data, and simple train and test scripts.

Here is the softmax classifier in Julia:

function softmax(w,x,ygold)

ypred = w[1] * x .+ w[2]

return softloss(ygold, ypred)

end

function softloss(ygold, ypred)

ynorm = ypred .- log(sum(exp(ypred),1))

-sum(ygold .* ynorm) / size(ygold,2)

end

softmax_gradient = grad(softmax)

Let us train our model for 100 epochs and print out the classification error on the training and test sets after every epoch (see the full example in Pkg.dir(“Knet/examples/mnist.jl”)):

w = Any[0.1*randn(10,784), zeros(10,1)]

for epoch=1:nepochs

for (x,y) in dtrn # dtrn is a list of minibatches

g = softmax_gradient(w, x, y)

for i in 1:length(w)

w[i] -= lr * g[i]

end

end

# Print accuracy

end

(:epoch,0,:trn,0.1135,:tst,0.1097)

(:epoch,1,:trn,0.9008666666666667,:tst,0.9048)

...

(:epoch,99,:trn,0.9274833333333333,:tst,0.9177)

(:epoch,100,:trn,0.92755,:tst,0.9176)

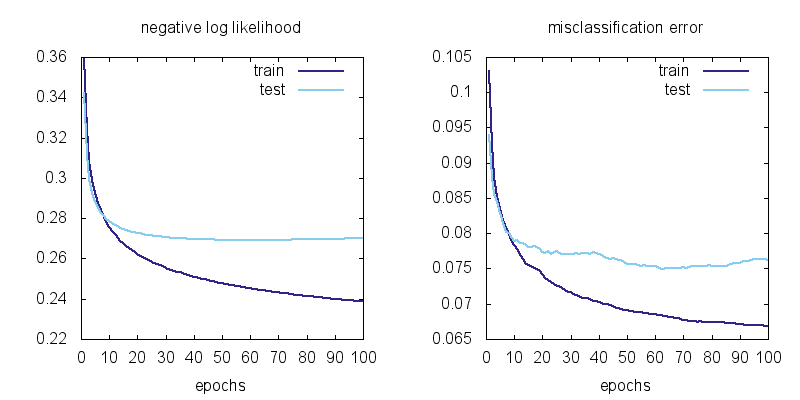

Here is a plot of the losses vs training epochs:

We can observe a few things. First the training losses are better than the test losses. This means there is some overfitting. Second, it does not look like the training loss is going down to zero. This means the softmax model is not flexible enough to fit the training data exactly.

Representational power¶

So far we have seen how to create a machine learning model as a differentiable program (linear regression, softmax classification) whose parameters can be adjusted to hopefully imitate whatever process generated our training data. A natural question to ask is whether a particular model can behave like any system we want (given the right parameters) or whether there is a limit to what it can represent.

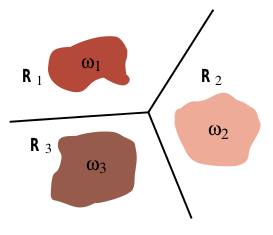

It turns out the softmax classifier is quite limited in its representational power: it can only represent linear classification boundaries. To show this, remember the form of the softmax classifier which gives the probability of the i’th class as:

where \(y_i\) is a linear function of the input \(x\). Note that \(p_i\) is a monotonically increasing function of \(y_i\), so for two classes \(i\) and \(j\), \(p_i > p_j\) if \(y_i > y_j\). The boundary between two classes \(i\) and \(j\) is the set of inputs for which the probability of the two classes are equal:

where \(w_i, b_i\) refer to the i’th row of \(w\) and \(b\). This is a linear equation, i.e. the border between two classes will always be linear in the input space with the softmax classifier:

In the MNIST example, the relation between the pixels and the digit classes is unlikely to be this simple. That is why we are stuck at 6-7% training error. To get better results we need more powerful models.